Facebook’s little mistakes have big consequences, too. (But only for us.)

This article is part of the On Tech newsletter.

In a Facebook group for gardeners, the social network’s automated systems sometimes flagged discussions about a common backyard tool as inappropriate sexual talk.

Facebook froze the accounts of some Native Americans years ago because its computers mistakenly believed that names like Lance Browneyes were fake.

The company repeatedly rejected ads from businesses that sell clothing for people with disabilities, mostly because it mistook the products for medical promotions, which are against its rules.

Facebook, which has renamed itself Meta, and other social networks must make tricky judgment calls that balance supporting free expression against keeping out unwanted material like imagery of child sexual abuse, violent incitements and financial scams. But that's not what happened in the examples above. Those were mistakes made by a computer that couldn't handle nuance.

Social networks are essential public spaces that are too big and fast-moving for anyone to effectively manage. Wrong calls happen.

These unglamorous mistakes aren't as momentous as deciding whether Facebook should kick the former U.S. president off its website. But ordinary people, businesses and groups serving the public interest, like news organizations, suffer when social networks cut off their accounts and they can't find help or figure out what they did wrong.

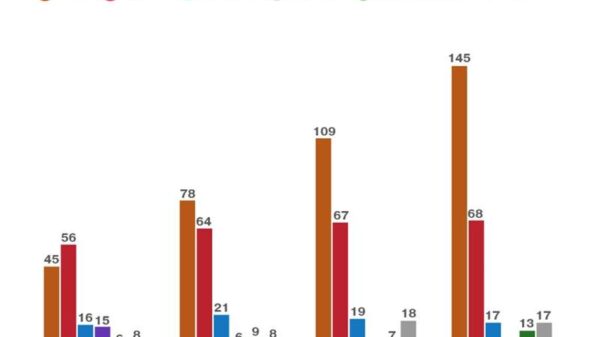

This doesn't happen often, but at Facebook's size, even a small percentage of mistakes adds up. The Wall Street Journal calculated that Facebook might make roughly 200,000 wrong calls a day.

People who research social networks told me that Facebook — and its peers, although I’ll focus on Facebook here — could do far more to make fewer mistakes and mitigate the harm when it does mess up.

The errors also raise a bigger question: Are we OK with companies being so essential that when they don’t fix mistakes, there’s not much we can do?

The company’s critics and the semi-independent Facebook Oversight Board have repeatedly said that Facebook needs to make it easier for users whose posts were deleted or accounts were disabled to understand what rules they broke and appeal judgment calls. Facebook has done some of this, but not enough.

Researchers also want to dig into Facebook’s data to analyze its decision making and how often it messes up. The company tends to oppose that idea as an intrusion on its users’ privacy.

Facebook has said that it’s working to be more transparent, and that it spends billions of dollars on computer systems and people to oversee communications in its apps. People will disagree with its decisions on posts no matter what.

But its critics again say it hasn’t done enough.

“These are legitimately hard problems, and I wouldn’t want to make these trade-offs and decisions,” said Evelyn Douek, a senior research fellow at the Knight First Amendment Institute at Columbia University. “But I don’t think they’ve tried everything yet or invested enough resources to say that we have the optimal number of errors.”

Most companies that make mistakes face serious consequences. Facebook rarely does. Ryan Calo, a professor at the University of Washington law school, made the comparison between Facebook and building demolition.

When companies tear down buildings, debris or vibrations might damage property or even injure people. Calo told me that because of the inherent risks, laws in the U.S. hold demolition companies to a high standard of accountability. The firms must take safety precautions and possibly cover any damages. Those potential consequences ideally make them more careful.

But Calo said that laws that govern responsibility on the internet didn’t do enough to likewise hold companies accountable for the harm that information, or restricting it, can cause.

“It’s time to stop pretending like this is so different from other types of societal harms,” Calo said.

Before we go …

- Losing touch with the world: A volcanic eruption and tsunami destroyed Tonga's communications lines and made it difficult for outsiders to know what was happening in the Pacific island nation or coordinate emergency help, my colleague Natasha Frost reports. MIT Technology Review also looks at what it will take to repair Tonga's single undersea internet cable and satellite internet connections, both of which may have been damaged.

- One man's journey into the QAnon conspiracy theory, and back out: A Brooklyn man named Justin spoke to NBC News about how his family, the violence of last year's Capitol riot, and rejecting a blanket distrust of people helped him slowly pull away from conspiracy theories.

- A government website that works! The U.S. Postal Service started taking online orders on Tuesday to ship free Covid tests to Americans' homes. More than a million people went to the government website at one point on Tuesday, and it mostly worked fine, my colleagues Sheryl Gay Stolberg and Lola Fadulu report.

Related from 2021: To build trust in the government, it would help if the website works.

Hugs to this

This kiddo shoveling snow is exhausted (DEEP SIGH), and wants to tell you all about it.

We want to hear from you. Tell us what you think of this newsletter and what else you’d like us to explore. You can reach us at ontech@nytimes.com.

If you don’t already get this newsletter in your inbox, please sign up here. You can also read past On Tech columns.